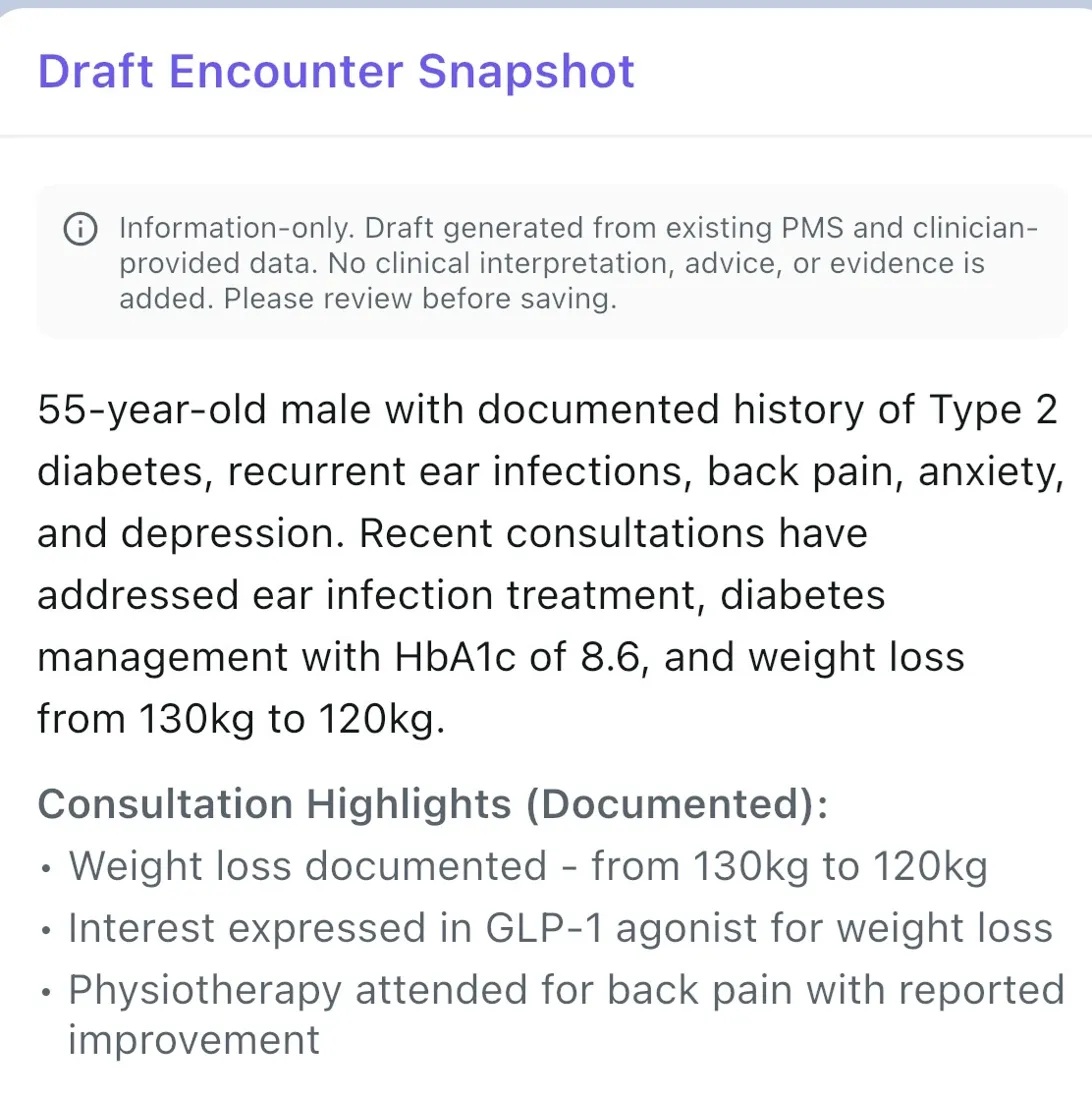

Many clinical AI tools provide recommendations, dosing guidance, and evaluative language such as “strongly supported” or “first-line.” In healthcare settings subject to clinical governance requirements, this creates risk. Echo-Health takes a fundamentally different approach.

✓ What Echo-Health does

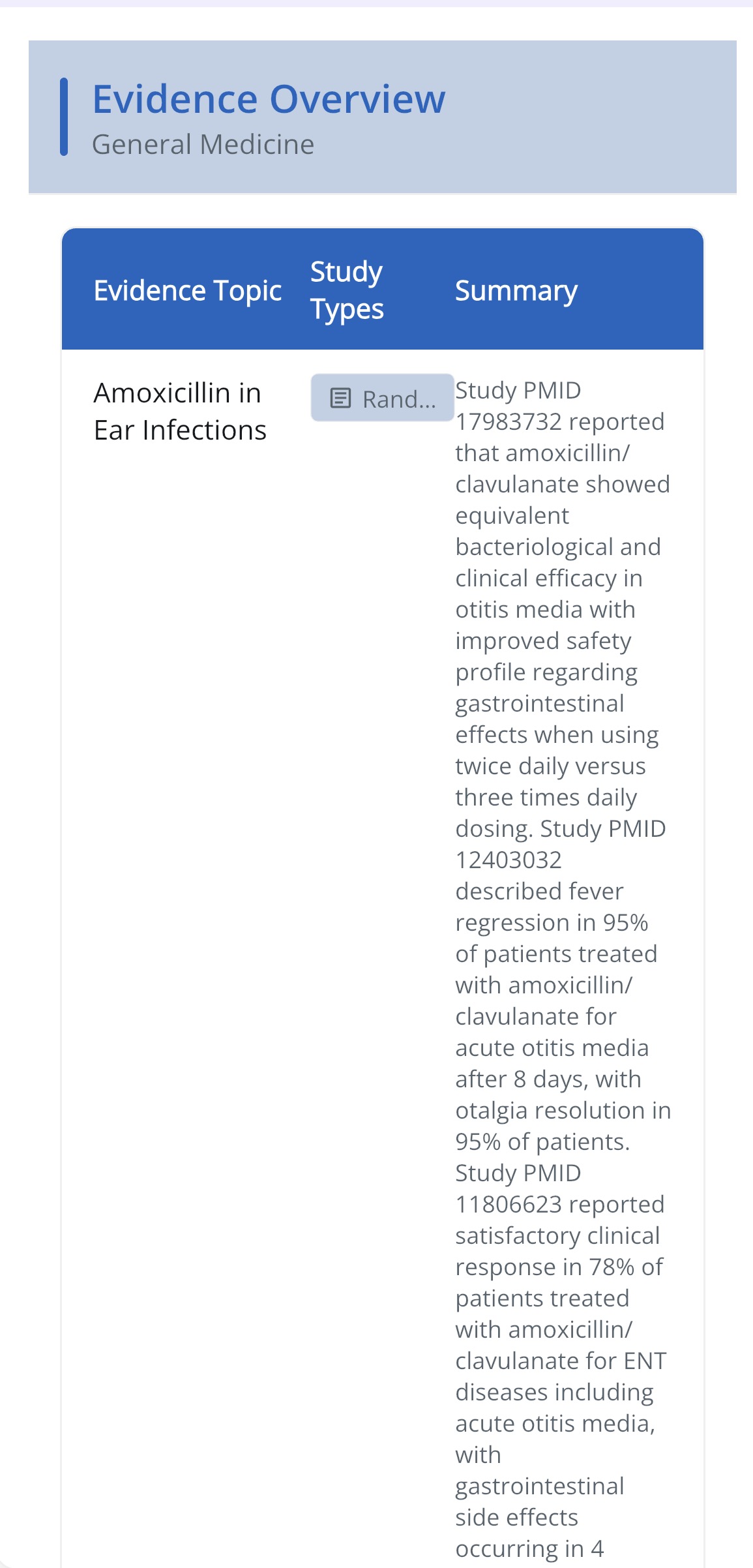

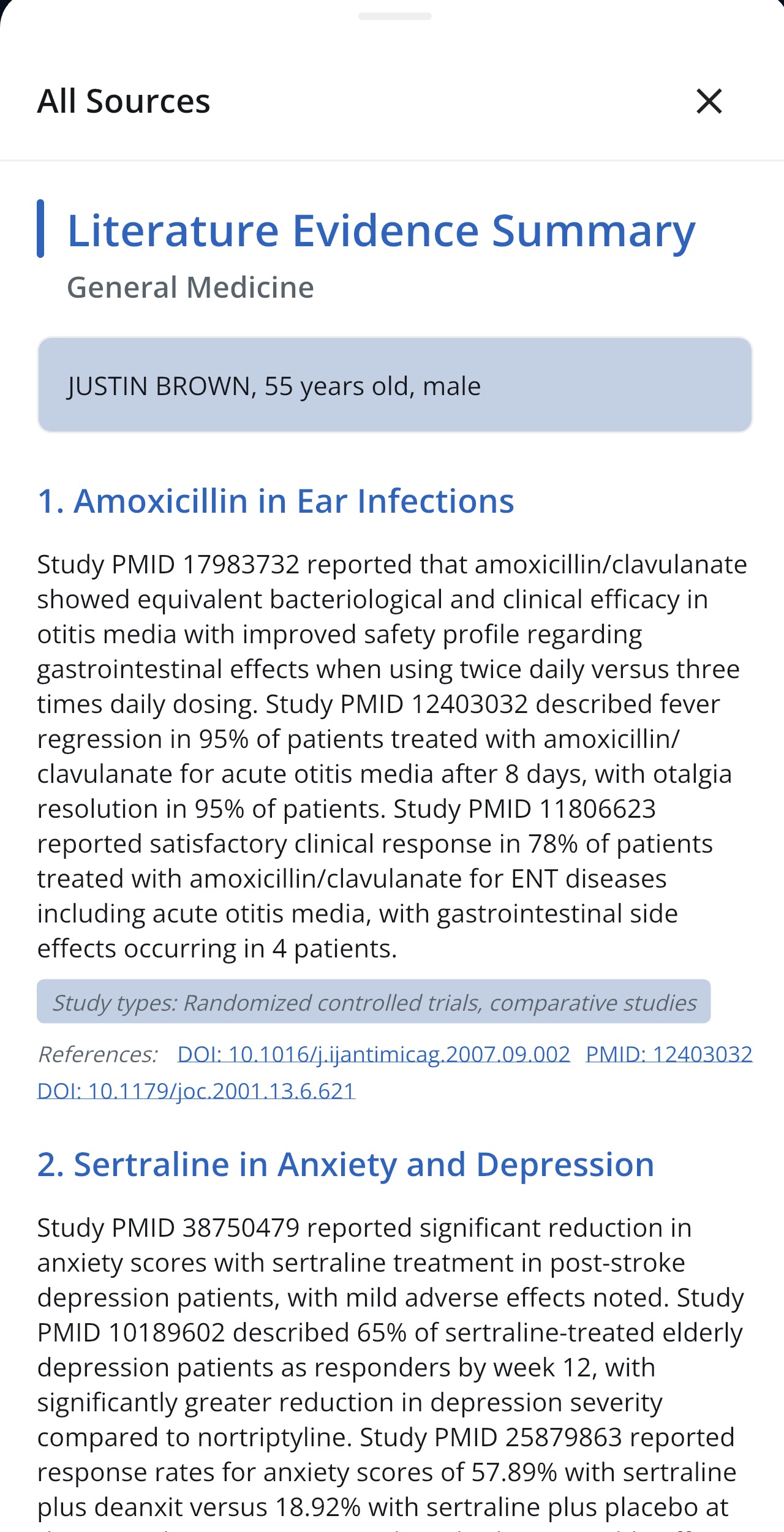

“Study A (PMID 38291045) reported a 1.2% reduction in HbA1c over 52 weeks in patients receiving empagliflozin.”

Neutral, descriptive, traceable to a specific published source.

✗ What Echo-Health never does

“Empagliflozin is a recommended first-line agent and should be started at 10 mg daily.”

Evaluative, prescriptive, untraceable. This language is prohibited by the system architecture.

🚫 Forbidden Language

The terms “should”, “best”, “recommended”, “first-line”, “appropriate”, “safe option”, “start with”, “dose”, “titrate”, “strongly supported”, and “clinical implication” are all prohibited in evidence output.
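
A prohibited-term filter like this could run as a post-generation check on every evidence response. The sketch below is a minimal Python example. It assumes a simple case-insensitive, whole-word match against the listed terms. The names `find_forbidden_terms` and `passes_language_check` are hypothetical and do not describe Echo-Health's actual implementation.

```python
import re

# The prohibited terms listed above, matched case-insensitively.
FORBIDDEN_TERMS = [
    "should", "best", "recommended", "first-line", "appropriate",
    "safe option", "start with", "dose", "titrate",
    "strongly supported", "clinical implication",
]

# Longest terms first so multi-word phrases are reported whole;
# \b word boundaries stop "best" from matching inside "bestow".
_FORBIDDEN_RE = re.compile(
    r"\b("
    + "|".join(re.escape(t) for t in sorted(FORBIDDEN_TERMS, key=len, reverse=True))
    + r")\b",
    re.IGNORECASE,
)


def find_forbidden_terms(text: str) -> list[str]:
    """Return every prohibited term in `text`, lower-cased, in order of appearance."""
    return [m.group(1).lower() for m in _FORBIDDEN_RE.finditer(text)]


def passes_language_check(text: str) -> bool:
    """True when `text` contains none of the prohibited terms."""
    return not find_forbidden_terms(text)


forbidden = find_forbidden_terms(
    "Empagliflozin is a recommended first-line agent and should be started at 10 mg daily."
)
print(forbidden)  # ['recommended', 'first-line', 'should']
```

Exact-term matching of this kind misses inflections such as “dosing” or “titrated.” A production filter would therefore need a broader term list or a morphological match.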

📎 Mandatory Citations

Every clinical statement must include a PMID and DOI where available. If no evidence is found, the system says so explicitly; it never infers or extrapolates.
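
The citation rule can be enforced by validating each statement before it is emitted. This Python sketch makes several assumptions. Statements carry their citations as structured objects. The PMID and DOI checks test only the shape of the identifier and do not query PubMed or doi.org. Uncited statements are dropped rather than shown. The class and function names and the “no evidence” wording are hypothetical.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Loose shape checks only: a PMID is an all-digit identifier, and a DOI
# starts with "10.<registrant code>/". Neither check contacts PubMed or doi.org.
_PMID_RE = re.compile(r"\d{1,8}")
_DOI_RE = re.compile(r"10\.\d{4,9}/\S+")


@dataclass
class Citation:
    pmid: str
    doi: Optional[str] = None  # DOI is attached "where available"


@dataclass
class Statement:
    text: str
    citations: list[Citation] = field(default_factory=list)


def citation_errors(statement: Statement) -> list[str]:
    """Return the reasons a statement fails the citation rule (empty means it passes)."""
    if not statement.citations:
        return ["no citation"]
    errors = []
    for c in statement.citations:
        if not _PMID_RE.fullmatch(c.pmid):
            errors.append(f"invalid PMID: {c.pmid!r}")
        if c.doi is not None and not _DOI_RE.fullmatch(c.doi):
            errors.append(f"invalid DOI: {c.doi!r}")
    return errors


# Hypothetical wording for the explicit "no evidence" reply.
NO_EVIDENCE_MESSAGE = "No evidence addressing this question was found in the retrieved articles."


def render(statements: list[Statement]) -> str:
    """Keep only fully cited statements; if none remain, say so rather than infer."""
    cited = [s for s in statements if not citation_errors(s)]
    if not cited:
        return NO_EVIDENCE_MESSAGE
    return " ".join(s.text for s in cited)
```

In this design, dropping an uncited statement is safer than attaching a guessed reference. When nothing survives, the fixed reply makes the absence of evidence visible instead of filling the gap.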

🔒 Structured Output

All evidence responses are returned as structured JSON with separate fields for the response, references, and metadata. This enables audit trails, downstream automation, and clinical governance traceability.
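
One way to represent such a response is as typed records serialised to JSON with the three top-level fields described above: `response`, `references`, and `metadata`. This Python sketch is illustrative only. The top-level field names come from the description. The contents of each reference (PMID plus optional DOI) and every metadata key are assumptions, not Echo-Health's published schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Optional


@dataclass
class Reference:
    pmid: str
    doi: Optional[str] = None


@dataclass
class EvidenceResponse:
    response: str                 # the neutral evidence summary
    references: list[Reference]   # one entry per cited article
    # Metadata keys are illustrative; a deployment would define its own schema.
    metadata: dict[str, Any] = field(default_factory=dict)


def to_json(result: EvidenceResponse) -> str:
    """Serialise with sorted keys so identical responses produce identical audit records."""
    return json.dumps(asdict(result), sort_keys=True, indent=2)


def from_json(payload: str) -> EvidenceResponse:
    """Parse a stored record back into typed objects for downstream checks."""
    data = json.loads(payload)
    return EvidenceResponse(
        response=data["response"],
        references=[Reference(**ref) for ref in data["references"]],
        metadata=data.get("metadata", {}),
    )


record = EvidenceResponse(
    response="Study A (PMID 38291045) reported a 1.2% reduction in HbA1c over 52 weeks "
             "in patients receiving empagliflozin.",
    references=[Reference(pmid="38291045")],
    metadata={"query_id": "example-001"},  # hypothetical metadata field
)
print(to_json(record))
```

Keeping references in their own field, rather than only inline in the text, lets an auditor or downstream job check every cited identifier without parsing prose.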

🛡 Advice Rejection

If a clinician asks for advice, recommendations, or instructions, the system responds: “I can only summarise the evidence from the referenced articles. Clinical decisions must be made by a qualified health professional.”
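
The rejection path can be pictured as a gate in front of the evidence summariser. This minimal Python sketch reuses the refusal text quoted above. It assumes advice requests can be caught with a hypothetical list of regular-expression patterns. A keyword list is a crude stand-in: a real system would more likely use a trained intent classifier. The names `is_advice_request` and `handle_query` are illustrative.

```python
import re
from typing import Callable

# Refusal text exactly as quoted above.
REFUSAL = (
    "I can only summarise the evidence from the referenced articles. "
    "Clinical decisions must be made by a qualified health professional."
)

# Hypothetical phrasings that signal a request for advice rather than evidence.
# A keyword list is a crude stand-in for a proper intent classifier.
_ADVICE_PATTERNS = [
    r"\bshould (i|we)\b",
    r"\bwhat (dose|dosage)\b",
    r"\bhow (much|often) should\b",
    r"\bdo you recommend\b",
    r"\bwhat would you (recommend|suggest|do)\b",
    r"\bis it (safe|ok|okay) to\b",
    r"\b(best|first-line) (treatment|option|drug|agent)\b",
]
_ADVICE_RE = re.compile("|".join(_ADVICE_PATTERNS), re.IGNORECASE)


def is_advice_request(query: str) -> bool:
    """True if the query matches any advice-seeking pattern."""
    return _ADVICE_RE.search(query) is not None


def handle_query(query: str, summarise: Callable[[str], str]) -> str:
    """Refuse advice requests; otherwise pass the query to the evidence summariser."""
    if is_advice_request(query):
        return REFUSAL
    return summarise(query)
```

For example, “Should I start empagliflozin in this patient?” returns the refusal. “What did trials report about empagliflozin and HbA1c?” is passed through to the summariser.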

Why this matters

Software that provides clinical recommendations or dosing advice introduces clinical risk. By constraining the system to evidence retrieval only, Echo-Health's evidence layer supports clinical governance requirements while still delivering genuine clinical value at the point of care.